How Common Index built An Ecology of Beasts on Daydream

For seven days at Milan Design Week 2026, Common Index ran a generative landscape that responded to visitors and weather in real time, with no on-site GPU. Here's how An Ecology of Beasts came together on Daydream's hosted StreamDiffusion.

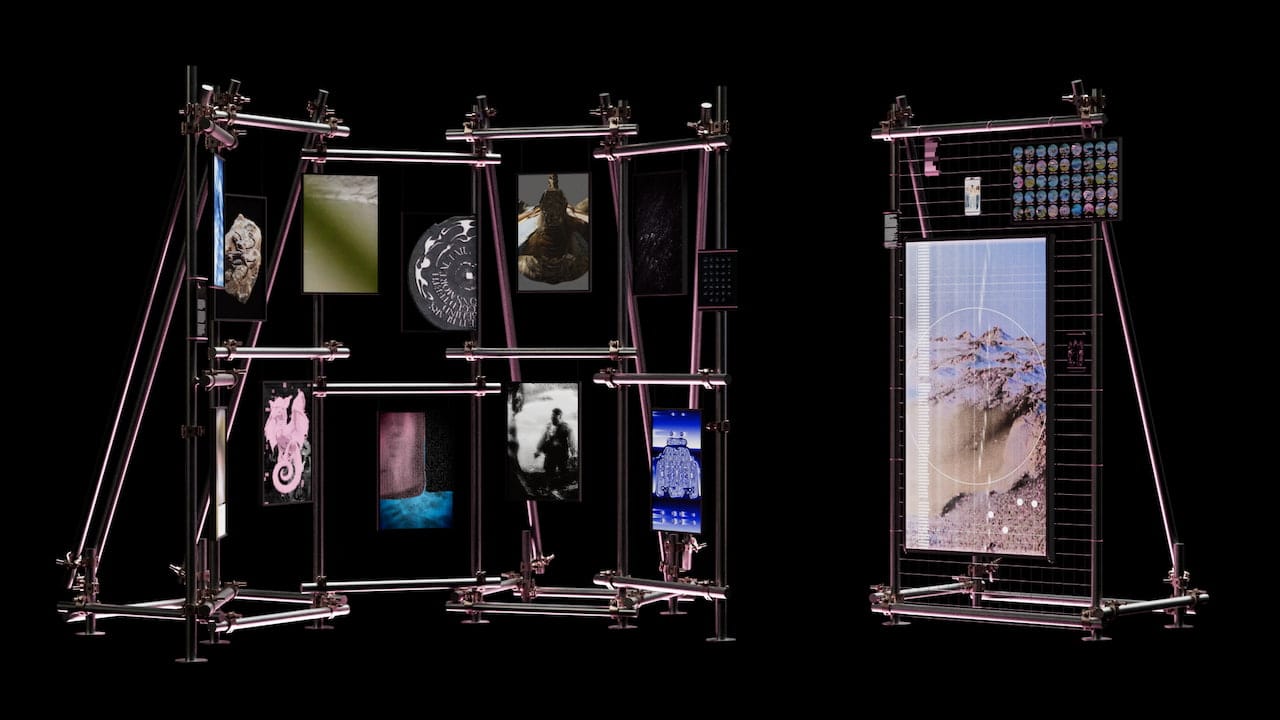

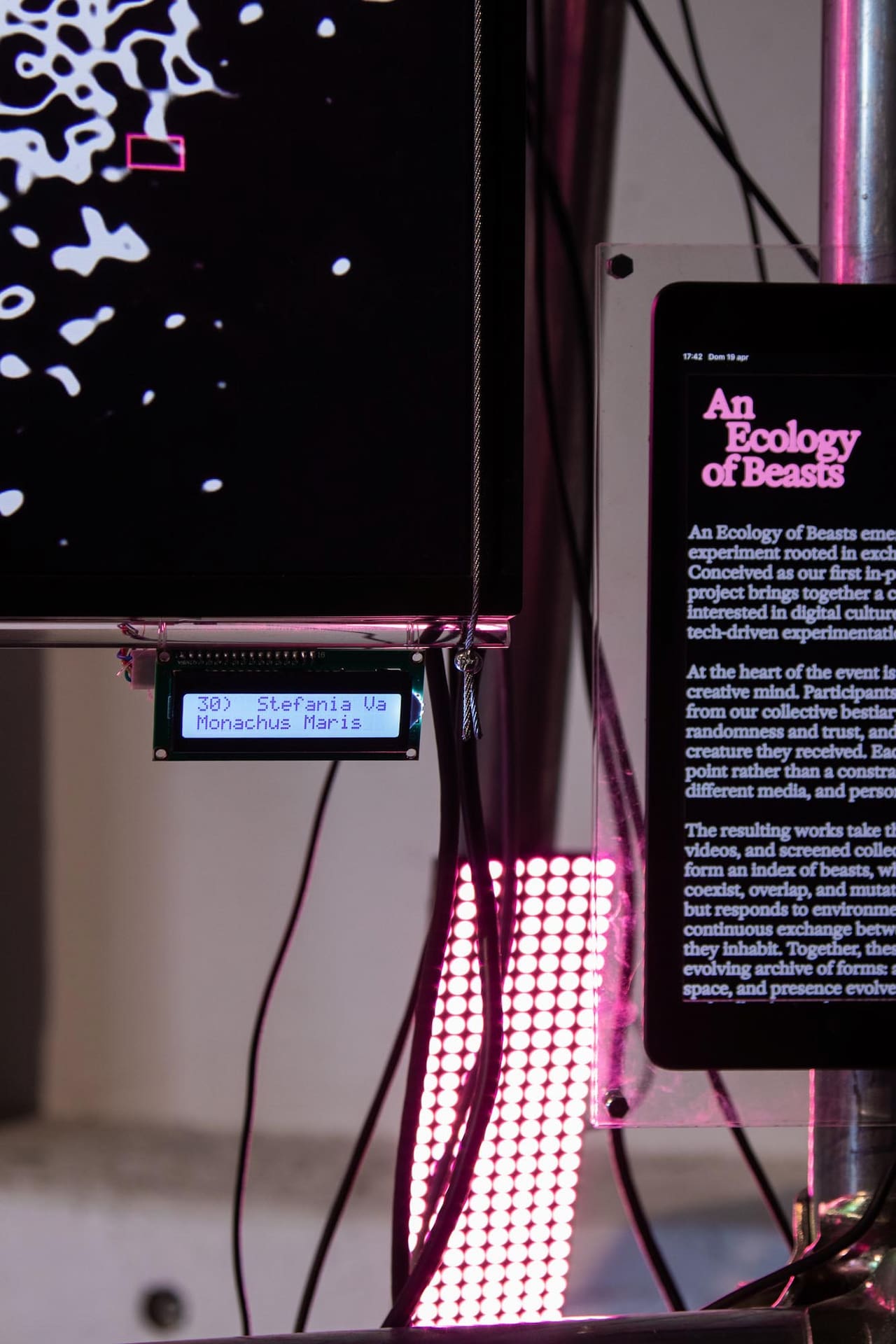

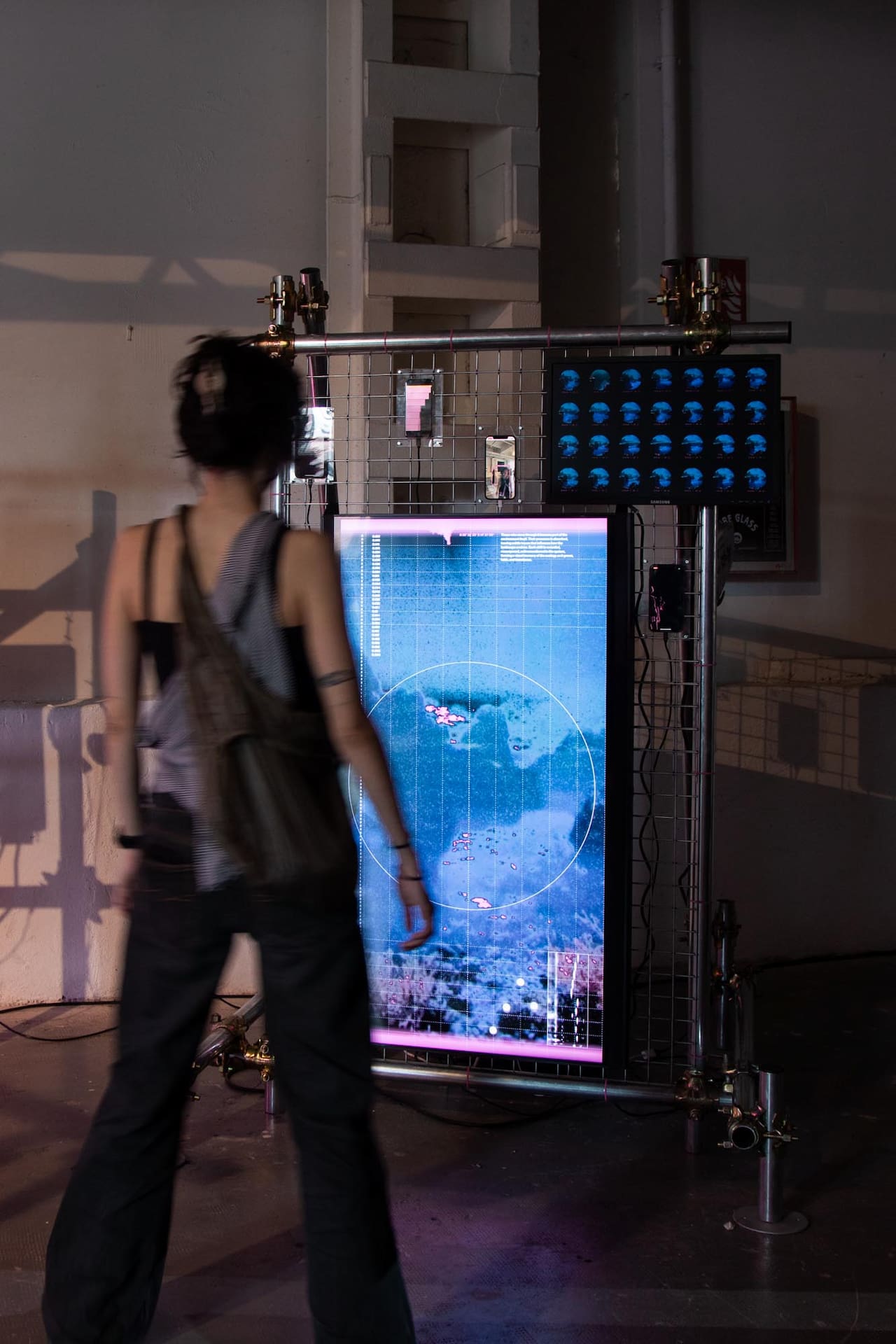

You walk into the industrial spaces at BASE Milano during this year's Milan Design Week. Metallic structures rise from the ground. On one side, vertical screens hang from a frame, cabled together, headphones dangling between them.

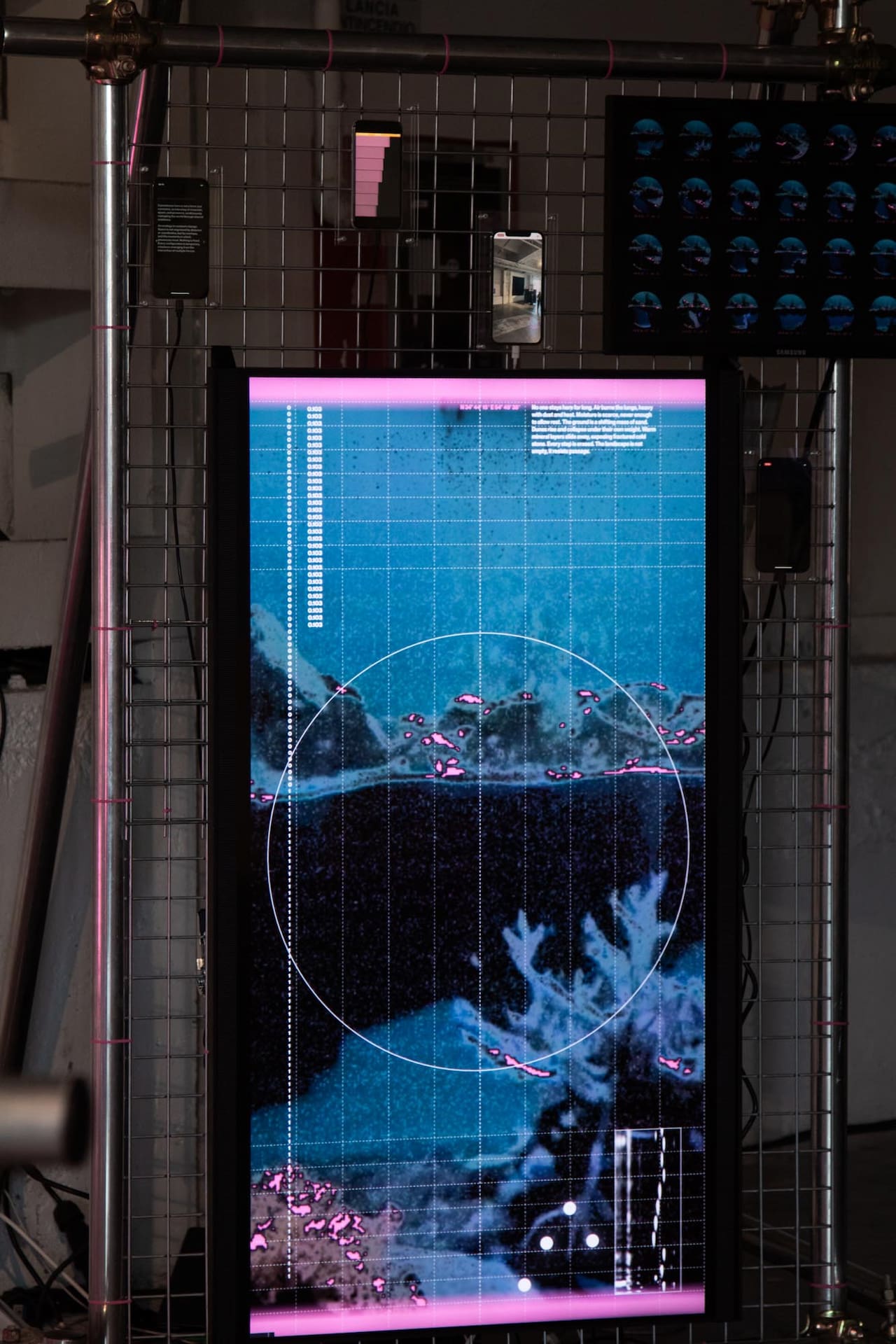

On them, vertical videos play in rotation: beasts pulled from a medieval bestiary and reinterpreted by 44 different artists. On the other side, a standalone structure hosts a landscape that is always moving. Mountains push up, water finds new edges, and vegetation thickens or thins. None of it loops, and none of it was rendered ahead of time. It responds, in some way you can't fully read, to the people in the room and the weather outside.

This is where Daydream came in.

The project is called An Ecology of Beasts, and it was built by Common Index, a Milan-based collective working at the intersection of design, technology, and shared practice. We powered the generative layer of their interactive installation, Cartography of Entangled States, through hosted StreamDiffusion on the Daydream API.

Who Common Index is

Common Index is Carlotta Bacchini, Dorsa Rafiee, Pietro Forino, and Simone Restifo Pilato.

Their manifesto opens with a line we keep coming back to: "Common Index is a device for design inquiry, collective making, and digital experimentation. Not for impact, but for care."

If you spend any time with their work, that closing pair holds up.

The whole structure of An Ecology of Beasts is built around making space for other people's voices instead of resolving everything into a single curatorial statement. They typically run open calls instead of selections. They split projects into interconnected components instead of finished artworks. And the part we really enjoyed: they usually write about their process in essays, not press releases. We gravitated toward them because that posture matches how we think about Daydream: infrastructure should let other people do their best work, not get in the way of it.

The two layers of An Ecology of Beasts

The project rethinks the medieval bestiary as a system rather than a catalog. In a real bestiary, the meaning of a creature came from how it sat next to the others. Behavior, environment, and relation were the unit, not the individual figure.

Common Index translated that into two interconnected components.

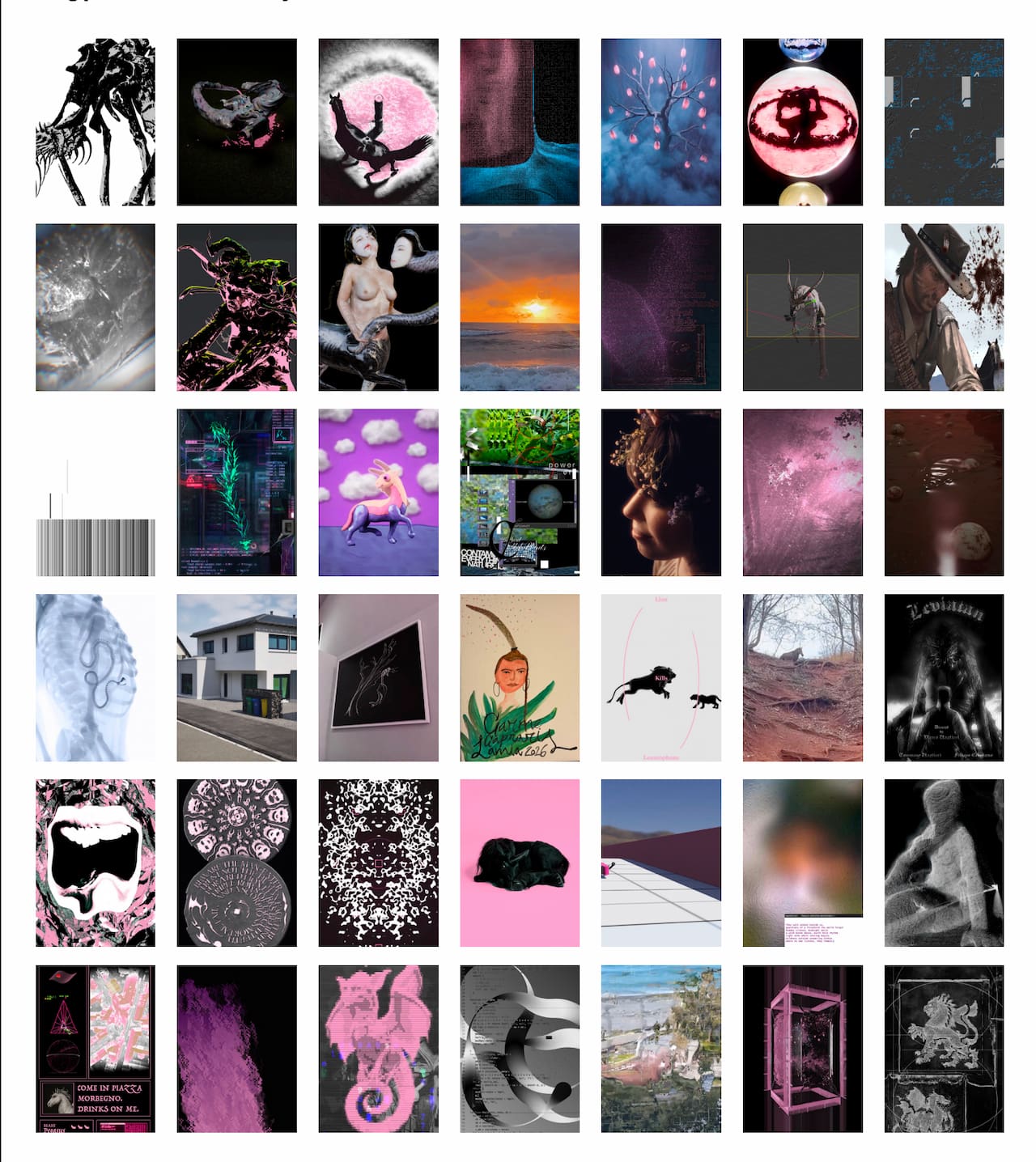

The first is the Collective Bestiary: an open call where 44 participants drew a creature from a custom-designed deck of 42 medieval beasts, from Amphisbaena to Zitius, then reinterpreted it however they wanted. The only constraint was that the output had to be a vertical video. Designers, filmmakers, developers, and researchers all submitted. The works coexist on the screens in the space, sequenced by a non-linear narrative rather than a fixed playlist. Inputs captured via external sensors let each creature assert itself according to its own characteristics, alongside a democratic rotation system. As a consequence, there is no curatorial flow, no thematic grouping, and no hierarchy.

The second is Cartography of Entangled States, the environmental counterpart. Where the bestiary gives shape to individual imaginaries, this installation gives shape to the world the beasts inhabit. The reference is to medieval mappae mundi: maps in which geography, theology, and mythology share the same surface, and in which places appear next to each other based on relation rather than coordinate distance.

Examples include Fra Mauro's Mappa Mundi from around 1450, the Psalter World Map from around 1265, and the Hereford Mappa Mundi from around 1300. Common Index translated that approach into a real-time digital landscape: zones of erosion, darkness, origin, and accumulation, all coexisting and shifting.

This is the screen running on hosted StreamDiffusion.

What the installation actually does

The Cartography is a continuously generated landscape that never settles. Inputs feed it from two directions.

From inside the room, presence detection, movement detection, and the overall sound level are all read live.

From outside, weather APIs return temperature, humidity, and precipitation data, connecting the installation to atmospheric conditions beyond the exhibition itself. Common Index wrote that these signals act as "underlying forces, allowing the landscape to evolve as a composite response to presence, activity, and surrounding conditions." The landscape reacts, but visitors don't drive it deterministically. You can feel that you're inside the system without ever quite seeing your own gesture come back at you.
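One way to picture signals acting as underlying forces rather than direct controls is to normalize each input and ease toward it slowly, so no single gesture shows up on screen. The sketch below is an illustration only, not Common Index's implementation: the names `Signals` and `ForceField`, the value ranges, the blend weights, and the smoothing factor are all assumptions.

```python
from dataclasses import dataclass
from typing import Tuple


def clamp01(x: float) -> float:
    """Clamp a value into the 0..1 range."""
    return max(0.0, min(1.0, x))


@dataclass
class Signals:
    """One reading of the room and the weather (all fields hypothetical)."""
    presence: float          # 0..1, how occupied the room is
    movement: float          # 0..1, amount of detected motion
    sound_level: float       # 0..1, normalized loudness
    temperature_c: float     # degrees Celsius from a weather API
    humidity_pct: float      # relative humidity, 0..100
    precipitation_mm: float  # rainfall in millimetres per hour


class ForceField:
    """Eases toward a blend of room and weather signals."""

    def __init__(self, smoothing: float = 0.05) -> None:
        self.smoothing = smoothing  # small value = slow, indirect response
        self.room = 0.0
        self.weather = 0.0

    def update(self, s: Signals) -> Tuple[float, float]:
        # Blend the three room signals into one activity level (assumed weights).
        room_target = clamp01(0.4 * s.presence + 0.4 * s.movement + 0.2 * s.sound_level)
        # Map weather onto 0..1 using assumed ranges (-10..40 C, 0..10 mm/h).
        temp = clamp01((s.temperature_c + 10.0) / 50.0)
        humid = clamp01(s.humidity_pct / 100.0)
        rain = clamp01(s.precipitation_mm / 10.0)
        weather_target = (temp + humid + rain) / 3.0
        # Exponential smoothing: move a fraction of the way toward each target.
        self.room += self.smoothing * (room_target - self.room)
        self.weather += self.smoothing * (weather_target - self.weather)
        return self.room, self.weather
```

With a small smoothing factor, a sudden burst of movement only nudges the output, which is one plausible reading of why visitors feel they are inside the system without seeing their own gesture come back at them.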

The system also accumulates. Temporary states are captured and recomposed over time, forming a visual memory of the room. The installation works as both a living ecosystem and an archive, a dual function it shares with a medieval bestiary. Their dossier shows grids of these snapshots: dozens of landscape configurations stamped with date and time, each one a momentary equilibrium that already no longer exists by the time you've read its timestamp.
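The accumulation described here can be pictured as a simple timestamped archive. This is a minimal sketch under assumptions, not the installation's actual code: `Snapshot`, `Archive`, the state keys, and the grid layout are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List


@dataclass
class Snapshot:
    """A captured landscape state with the moment it was taken."""
    taken_at: datetime
    state: Dict[str, float]

    def label(self) -> str:
        # Date and time stamp, as it might appear under a grid cell.
        return self.taken_at.strftime("%Y-%m-%d %H:%M:%S")


@dataclass
class Archive:
    """Accumulates snapshots and lays their labels out as rows of a grid."""
    snapshots: List[Snapshot] = field(default_factory=list)

    def capture(self, state: Dict[str, float], taken_at: datetime) -> Snapshot:
        # Copy the state so later changes to the live system do not alter the record.
        snap = Snapshot(taken_at=taken_at, state=dict(state))
        self.snapshots.append(snap)
        return snap

    def grid(self, columns: int) -> List[List[str]]:
        # Chunk the labels into rows of `columns` items, oldest first.
        labels = [s.label() for s in self.snapshots]
        return [labels[i:i + columns] for i in range(0, len(labels), columns)]
```

Copying the state on capture is the design choice that matters: the live landscape keeps moving, while each archived entry stays fixed at the equilibrium it recorded.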

Why the cloud route made sense for this work

If you've built anything in TouchDesigner that uses diffusion-based generation, you know the local-render rig is real engineering. You're committing a high-end GPU to the venue for the duration of the show. You're managing thermals and uptime for however many days the exhibition runs. Any creative iteration during install happens on the same hardware that's about to run the installation continuously. For An Ecology of Beasts, that show was seven days at Milan Design Week.

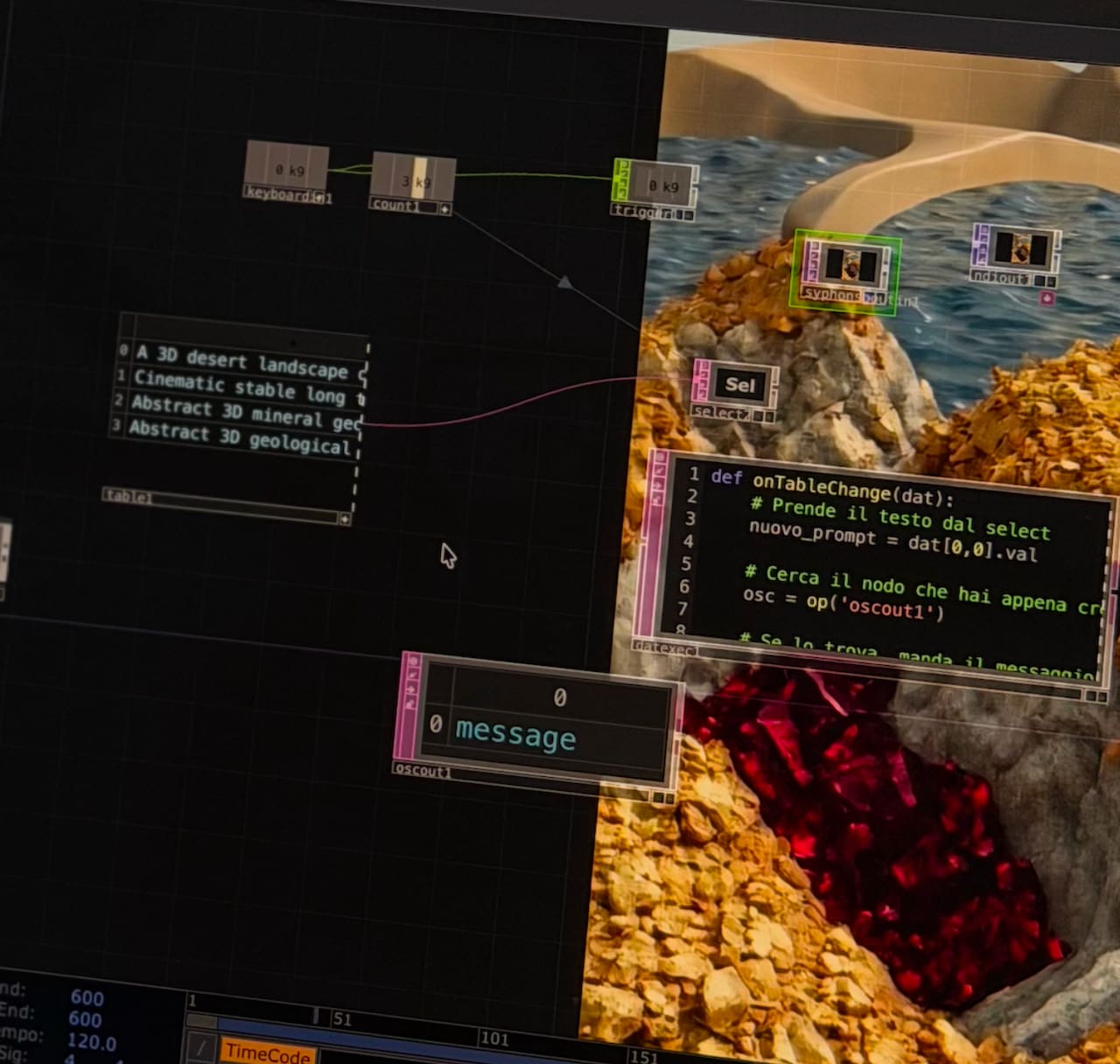

So Common Index didn't go that route. They sent their inputs from TouchDesigner to our API, we ran the diffusion model on our infrastructure, and the frames came back to TouchDesigner where their project composited them with the grid, coordinates, and overlay graphics you can see in the photos.

This changes a few things in practice. The render machine isn't on site, so the venue setup consists only of displays and sensors. Iteration during testing doesn't compete with production capacity, because there isn't a single fixed GPU to compete over. Stability during a week-long continuous run becomes our problem, not theirs. And on the creative side, the hardware ceiling that usually shapes what you can attempt with diffusion in a live context just lifts.

Common Index put it more directly than we would, in their LinkedIn post about the show:

"Their open-source cloud infrastructure gave us the freedom to stay fully inside the concept. The flexibility of their tools allowed us to integrate them seamlessly with almost no effort from our side. From them, we received pretty infinite computation time for testing and exhibiting the installation, but above all, we received constant support at almost every time of the day."

That last line is the part we care about most. Generative systems for live exhibitions are the kind of project that might need attention at 11 pm the night before opening, and the partner you want is the one who picks up the call.

How hosted StreamDiffusion fits with TouchDesigner

If you've worked with StreamDiffusion locally, the cloud path is mostly the same shape, just with the model elsewhere.

Hosted StreamDiffusion is our managed version of the StreamDiffusion pipeline, running on the Daydream API. It went live a while ago and has been quietly powering work like Common Index's since.

The model itself runs in our cloud, so if you're on a Mac or an underpowered Windows machine, you can finally use StreamDiffusion without a local GPU doing the work. It works great with the StreamDiffusionTD plugin built by Lyell Hintz (dotsimulate), which stays exactly where it's always been, inside TouchDesigner.

The mental model is straightforward. TouchDesigner stays the place where you do your concept work, scene composition, sensor wiring, and output. The diffusion happens elsewhere.

You send parameters and source frames to the API, you get processed frames back, and the rest of your TD project doesn't have to know or care that there's a cloud GPU in the loop.
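The shape of that round trip can be sketched without any real network code. To be clear about what is assumed: this is not the Daydream API or the StreamDiffusionTD plugin, and `Request`, `Backend`, `fake_backend`, and `run_loop` are hypothetical names. The "backend" here is a local stand-in function that only tags each frame, so the example shows the flow of data and nothing about the actual service.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Frame = bytes  # stand-in for encoded image data


@dataclass
class Request:
    """What the local project sends out: parameters plus one source frame."""
    params: Dict[str, float]
    source: Frame


# A backend is anything that turns a request into a processed frame.
# In production this would be a network call; here it is a plain function.
Backend = Callable[[Request], Frame]


def fake_backend(req: Request) -> Frame:
    """Hypothetical stand-in for the remote model: tags the frame, nothing more."""
    return b"processed:" + req.source


def run_loop(frames: List[Frame], params: Dict[str, float], backend: Backend) -> List[Frame]:
    """Send each source frame out and collect what comes back, in order."""
    results = []
    for frame in frames:
        # Copy params so a later change does not rewrite an earlier request.
        results.append(backend(Request(params=dict(params), source=frame)))
    return results
```

Because the rest of the code only depends on the `Backend` signature, swapping a local function for a remote one leaves everything upstream and downstream untouched, which is the point the paragraph above makes about the TD project not needing to know there is a cloud GPU in the loop.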

For Common Index, this meant their sensor-to-landscape logic stayed inside the TouchDesigner project where it belonged, and the model layer was just one more node away.

What this points at

Common Index is going to keep building. They are interested in exploring and using AI tools in unconventional ways. What does that mean? Essentially, they are exploring how to integrate AI into different parts of the process, going beyond generative AI for images alone and bringing real-time AI closer to, and into, the interaction process itself.

And as for Daydream, we're going to keep building too. Hosted StreamDiffusion is one of the pieces of the Daydream API, and projects like this one tell us a lot about what installation artists actually need from the model layer when they're not just demoing in a studio but committing to a public, continuous run.

If you're working on something similar, the integration path is well-trodden: TouchDesigner, the StreamDiffusionTD plugin from dotsimulate, and the Daydream API. You can read more about what hosted StreamDiffusion does and why it exists in the foundational post on the Daydream blog. You can grab the API directly at daydream.live/streamdiffusiontd, or, if you want to talk through a specific install, drop us a line in our Discord. We tend to be around.

The bestiary was on the small screens. The cartography was on the bigger one. Somewhere in there, a model was running on our infrastructure, generating a landscape that nobody who walked through that room will ever see again.

An Ecology of Beasts is a project by Common Index: Carlotta Bacchini, Dorsa Rafiee, Pietro Forino, and Simone Restifo Pilato. Exhibition design by Sole° (Asia Trianda, Nicolò Cozza), with engineering support from R4M Engineering.

The workshop "Sensing Beyond the Human" was led by Giacomo Baccega. Presented at BASE Milano, April 20-26, 2026, as part of We Will Design 2026, HELLO DARKNESS.

Generative and computational support: Daydream.